Use the following checklists to help identify and resolve performance issues. Every environment and problem is unique, so treat these checklists as a baseline to guide you. If you cannot resolve a problem area or have questions, contact the Itential Product Support Team.

Performance issues you may encounter include:

To inspect network latency and packet loss, use mtr:

Example output:

In this report, packet loss is 0%. The average round-trip time to the destination is 1880 ms, the best is 235.2 ms, and the worst is 2072 ms.
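When reports are collected from several hosts, reading them by eye gets tedious. The following is a minimal sketch, not an Itential tool, that parses text in the layout commonly printed by `mtr --report` and flags hops with high loss or latency. The sample report, the function names, and the thresholds are all hypothetical, and the column layout may differ depending on the mtr version and options used.

```python
import re

# Hypothetical sample in the common `mtr --report` layout.
# Hosts and numbers are invented for illustration only.
SAMPLE_REPORT = """\
HOST: client.example            Loss%   Snt   Last   Avg  Best  Wrst StDev
  1.|-- 192.0.2.1                0.0%    10    0.4   0.5   0.3   0.9   0.2
  2.|-- 198.51.100.7            10.0%    10   12.1  15.3  11.8  30.2   5.6
"""

# One hop per line: index, host, loss percentage, packets sent, then
# last/avg/best/worst/stdev round-trip times in milliseconds.
HOP_LINE = re.compile(
    r"^\s*(?P<hop>\d+)\.\|--\s+(?P<host>\S+)\s+(?P<loss>[\d.]+)%\s+(?P<sent>\d+)"
    r"\s+(?P<last>[\d.]+)\s+(?P<avg>[\d.]+)\s+(?P<best>[\d.]+)"
    r"\s+(?P<worst>[\d.]+)\s+(?P<stdev>[\d.]+)\s*$"
)


def parse_mtr_report(text):
    """Return one dict per hop line; header and unrecognized lines are skipped."""
    hops = []
    for line in text.splitlines():
        match = HOP_LINE.match(line)
        if match is None:
            continue
        hops.append({
            "hop": int(match["hop"]),
            "host": match["host"],
            "loss_pct": float(match["loss"]),
            "avg_ms": float(match["avg"]),
            "best_ms": float(match["best"]),
            "worst_ms": float(match["worst"]),
        })
    return hops


def flag_hops(hops, max_loss_pct=1.0, max_avg_ms=100.0):
    """Return hops whose loss or average latency exceeds the assumed thresholds."""
    return [
        hop for hop in hops
        if hop["loss_pct"] > max_loss_pct or hop["avg_ms"] > max_avg_ms
    ]


if __name__ == "__main__":
    for hop in flag_hops(parse_mtr_report(SAMPLE_REPORT)):
        print(f"hop {hop['hop']} ({hop['host']}): "
              f"{hop['loss_pct']}% loss, {hop['avg_ms']} ms average")
```

Tune `max_loss_pct` and `max_avg_ms` to what is normal for your network; the defaults here are placeholders, not recommended limits.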

For instances where the cause of a performance issue is unknown:

Fetch a count of jobs that have run more than 500 times (on Itential Platform 2021.2 and higher, Job Metrics in Operations Manager is also available):

Fetch the document counts for the jobs and tasks collections, both for the full day and for each hour:

Replace LOWERBOUNDS and UPPERBOUNDS with the epoch time values (in milliseconds) that mark the start and end of the time window you want to count.
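Epoch millisecond values are easy to get wrong by hand, so the following sketch shows one way to compute them for a full day and for each hour of that day. It assumes the window is defined in UTC and that each window runs from its lower bound up to the start of the next one; the function names are illustrative and not part of any Itential tooling. Adjust the time zone if your timestamps are stored differently.

```python
from datetime import datetime, timedelta, timezone

EPOCH = datetime(1970, 1, 1, tzinfo=timezone.utc)


def to_epoch_ms(moment):
    """Convert a timezone-aware datetime to whole epoch milliseconds."""
    return (moment - EPOCH) // timedelta(milliseconds=1)


def day_bounds(year, month, day):
    """Return (lower, upper) epoch-ms bounds covering one UTC day."""
    start = datetime(year, month, day, tzinfo=timezone.utc)
    return to_epoch_ms(start), to_epoch_ms(start + timedelta(days=1))


def hourly_bounds(year, month, day):
    """Return 24 (lower, upper) epoch-ms pairs, one per hour of the UTC day."""
    start = datetime(year, month, day, tzinfo=timezone.utc)
    return [
        (to_epoch_ms(start + timedelta(hours=hour)),
         to_epoch_ms(start + timedelta(hours=hour + 1)))
        for hour in range(24)
    ]


if __name__ == "__main__":
    lower, upper = day_bounds(2024, 1, 1)
    print("full day:", lower, upper)
    for hour_lower, hour_upper in hourly_bounds(2024, 1, 1):
        print("hour:", hour_lower, hour_upper)
```

Substitute each pair for LOWERBOUNDS and UPPERBOUNDS in turn to get the full-day count followed by the hourly counts.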

Export job metrics data for analysis. Replace "JOB-ID-HERE" with the specific job ID:

Validate job run and completion rates:

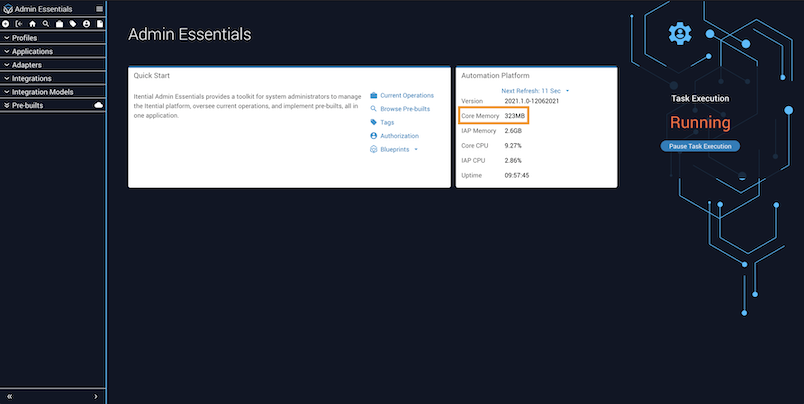

Use Admin Essentials to evaluate core memory usage.

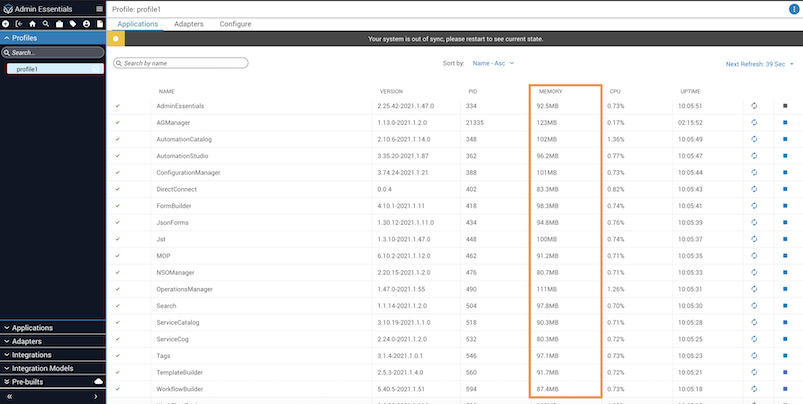

From the Profile view, you can also check memory usage for both Applications and Adapters, and compare it with the memory usage reported by your local server controls.

If the memory used by an app keeps growing over time and never decreases, there may be a memory leak. Submit an ISD ticket with Itential for any product apps or adapters that show higher-than-expected memory use.
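If you record memory readings for an app at regular intervals, the "keeps growing without decreasing" pattern can be checked mechanically. The following is a rough sketch under stated assumptions: the readings are in megabytes, the function name, sample values, minimum sample count, and tolerance are all hypothetical, and a positive result is only a prompt to investigate further, not proof of a leak.

```python
def looks_like_leak(samples_mb, min_samples=6, tolerance_mb=1.0):
    """Return True when memory grows overall and never drops between readings.

    samples_mb   -- memory readings in MB, oldest first
    min_samples  -- fewer readings than this is treated as inconclusive
    tolerance_mb -- small dips up to this size are ignored as noise
    """
    if len(samples_mb) < min_samples:
        return False
    # Every reading must be at least the previous one, minus the tolerance.
    never_drops = all(
        later >= earlier - tolerance_mb
        for earlier, later in zip(samples_mb, samples_mb[1:])
    )
    # The last reading must be meaningfully higher than the first.
    grew = samples_mb[-1] - samples_mb[0] > tolerance_mb
    return never_drops and grew


if __name__ == "__main__":
    # Hypothetical readings, for illustration only.
    steady_growth = [200, 210, 221, 230, 242, 255]
    normal_sawtooth = [200, 240, 205, 238, 210, 236]
    print(looks_like_leak(steady_growth))
    print(looks_like_leak(normal_sawtooth))
```

Memory that rises and then falls back after garbage collection, as in the second series, is usually normal; the check is meant to single out the first kind of series, where usage only ever climbs.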